The world has changed dramatically since generative AI made its debut. Businesses are starting to use it to summarize online reviews. Consumers are getting problems resolved through chatbots. Employees are getting their jobs done faster with AI assistants. What these AI applications have in common is that they rely on generative AI models that have been trained on high-performance back-end networks in the data center and served through AI inference clusters deployed in data center front-end networks.

Training models can use billions or even trillions of parameters to process massive data sets across artificial intelligence/machine learning (AI/ML) clusters of graphics processing unit (GPU)-based servers. Any delays, such as those caused by network congestion or packet loss, can dramatically impact the accuracy and training time of these AI models. As AI/ML clusters grow ever larger, the platforms used to build them need to support higher port speeds as well as higher radices (that is, a larger number of ports). A higher radix enables flatter topologies, which reduces the number of layers and improves performance.

Meeting the demands of high-performance AI clusters

In recent years, we have seen GPU needs for scale-out bandwidth increase from 200G to 400G to 800G, which is accelerating connectivity requirements compared to traditional CPU-based compute solutions. The density of the data center leaf must increase accordingly, while also maximizing the number of addressable nodes with flatter topologies.

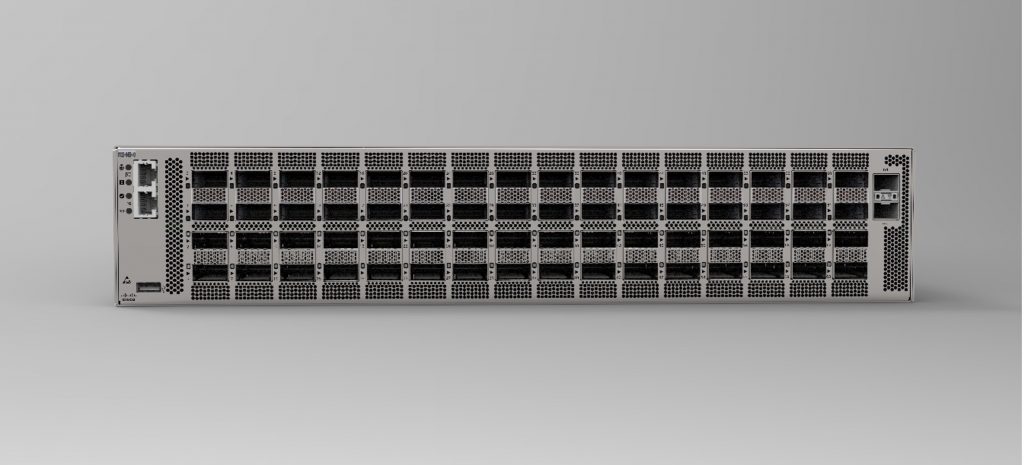

To address these needs, we’re introducing the Cisco 8122-64EH/EHF with support for 64 ports of 800G. This new platform is powered by the Cisco Silicon One G200, a 5 nm 51.2T processor that uses 512 x 112G SerDes, which enables extreme scaling capabilities in just a two-rack unit (2RU) form factor (see Figure 1). With 64 QSFP-DD800 or OSFP interfaces, the Cisco 8122 supports options for 2x 400G and 8x 100G Ethernet connectivity.
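The port and capacity figures above can be cross-checked with a few lines of arithmetic. The sketch below is illustrative only: the constant and function names are hypothetical, and it assumes each 112G SerDes lane carries about 100 Gbps of Ethernet payload, which is what makes 512 lanes add up to 51.2 Tbps.

```python
# Illustrative arithmetic only; names are hypothetical, not part of any Cisco tool.
SERDES_LANES = 512        # SerDes lanes on the switch silicon (from the text)
LANE_PAYLOAD_GBPS = 100   # ASSUMPTION: usable Ethernet rate per 112G SerDes lane
PHYSICAL_PORTS = 64       # 800G QSFP-DD800 or OSFP interfaces (from the text)

# Breakout mode -> (logical ports per physical port, speed of each in Gbps)
BREAKOUT_MODES = {
    "1x800G": (1, 800),
    "2x400G": (2, 400),
    "8x100G": (8, 100),
}


def silicon_capacity_gbps() -> int:
    """Aggregate capacity implied by the SerDes lane count."""
    return SERDES_LANES * LANE_PAYLOAD_GBPS


def logical_ports(mode: str) -> int:
    """Number of Ethernet ports the system exposes in a given breakout mode."""
    per_port, _speed = BREAKOUT_MODES[mode]
    return PHYSICAL_PORTS * per_port


def front_panel_capacity_gbps(mode: str) -> int:
    """Aggregate front-panel bandwidth in a given breakout mode."""
    _per_port, speed = BREAKOUT_MODES[mode]
    return logical_ports(mode) * speed


if __name__ == "__main__":
    print(f"silicon: {silicon_capacity_gbps() / 1000} Tbps")
    for mode in BREAKOUT_MODES:
        ports = logical_ports(mode)
        tbps = front_panel_capacity_gbps(mode) / 1000
        print(f"{mode}: {ports} ports, {tbps} Tbps")
```

Under these assumptions every breakout mode uses the same 51.2 Tbps, and the 8x 100G mode exposes one logical port per SerDes lane.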

The Cisco Silicon One architecture, with its fully shared packet buffer for congestion control and P4 programmable forwarding engine, along with the Silicon One software development kit (SDK), is proven and trusted by hyperscalers globally. Through major innovations, the Cisco Silicon One G200 supports 2x the performance and power efficiency, as well as lower latency, compared to the previous-generation device.

With the introduction of the Cisco Silicon One G200 last year, Cisco was first to market with a 512-wide radix, which can help cloud providers lower costs, complexity, and latency by designing networks with fewer layers, switches, and optics. Advancements in load balancing, link-failure avoidance, and congestion response/avoidance help improve job completion times and reliability at scale for better AI workload performance (see Cisco Silicon One Breaks the 51.2 Tbps Barrier for more details).
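To make the link between radix and network layers concrete, here is a minimal sketch based on textbook folded-Clos (fat-tree) sizing. It is not a Cisco sizing tool: the function names are hypothetical, and it assumes a non-blocking fabric built from identical switches in which half of each lower-tier switch's ports face downward.

```python
# Textbook non-blocking Clos sizing; an illustration under stated assumptions,
# not a vendor design rule.

def two_tier_endpoints(radix: int) -> int:
    """Max endpoints in a non-blocking leaf-spine fabric.

    Each leaf uses radix/2 ports for endpoints and radix/2 for spines,
    and each spine can connect up to `radix` leaves.
    """
    return radix * (radix // 2)


def three_tier_endpoints(radix: int) -> int:
    """Max endpoints in a classic three-tier k-ary fat-tree: k^3 / 4."""
    return radix ** 3 // 4


def tiers_needed(endpoints: int, radix: int) -> int:
    """Smallest number of switching tiers that can attach `endpoints`."""
    if endpoints <= radix:
        return 1
    if endpoints <= two_tier_endpoints(radix):
        return 2
    if endpoints <= three_tier_endpoints(radix):
        return 3
    raise ValueError("more than three tiers required")


if __name__ == "__main__":
    # Compare a radix-512 switch with a hypothetical radix-256 switch
    # for the same number of 100G endpoints.
    for radix in (256, 512):
        print(radix, two_tier_endpoints(radix), tiers_needed(65_536, radix))
```

In this model, 65,536 endpoints fit in two tiers at radix 512 but need a third tier at radix 256. A third tier means more switches, more optics, and extra hops per path.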

The Cisco 8122 supports open network operating systems (NOSs), such as Software for Open Networking in the Cloud (SONiC), and other third-party NOSs. Through broad application programming interface (API) support, cloud providers can use tooling for management and visibility to efficiently operate the network. With these customizable options, we’re making it easier for hyperscalers and other cloud providers that are adopting the hyperscaler model to meet their requirements.

In addition to scaling out back-end networks, the Cisco 8122 can also be used for mainstream workloads in front-end networks, such as email and web servers, databases, and other traditional applications.

Improving customer outcomes

With these innovations, cloud providers can benefit from:

- Simplification: Cloud providers can streamline networks by reducing the number of platforms needed to scale with high-capacity compact systems, as well as relying on fewer networking layers and optics and less cabling. Complexity can also be reduced through fewer platforms to manage, which can help lower operational costs.

- Flexibility: Using an open platform allows cloud providers to choose the network operating system (NOS) that best fits their needs and to develop custom automation tools to operate the network through APIs.

- Network velocity: Scaling the infrastructure efficiently leads to fewer potential bottlenecks and delays that could cause slower response times and undesirable outcomes with AI workloads. Advanced congestion management, optimized reliability capabilities, and increased scalability help enable better network performance for AI/ML clusters.

- Sustainability: The power efficiency of the Cisco Silicon One G200 can help cloud providers meet data center sustainability goals. The higher radix helps reduce the number of devices by using a flatter structure to better control power consumption.

The future of cloud network infrastructure

We’re giving cloud providers the flexibility to meet critical cloud network infrastructure requirements for AI training and inferencing with the Cisco 8122-64EH/EHF. With this platform, cloud providers can better control costs, latency, space, power consumption, and complexity in both front-end and back-end networks. At Cisco, we’re investing in silicon, systems, and optics to help build scalable, high-performance data center networks for cloud providers to help deliver high-quality results and insights quickly with AI and mainstream workloads.

The Open Compute Project (OCP) Global Summit is October 15–17, 2024, in San Jose. Come visit us in the community lounge to learn more about our exciting new innovations; customers can sign up to see a demo here.
